
Hang up your apron, because this robot can cook and serve hot dogs

If you imagine a future in which a robot pulls up to your table at a fast-food joint and serves up hot dogs, that future could arrive sooner than you think.

A Boston University research team has succeeded in teaching a robot to cook and serve a hot dog. The team pulled it off using reinforcement learning (RL), which trains AI to make decisions by rewarding correct moves and penalizing incorrect ones. It might sound like a task most humans could do in their sleep, but the robot needs every step clearly defined before it can learn that step, and it has to operate within safety constraints that need to stay in place unless you want to end up in a scene from the revamp of Child’s Play.

“Experience and prior knowledge shape the way humans make decisions when asked to perform complex tasks,” said Ph.D. student Zachary Serlin and colleagues in a study recently published in Science Robotics. “Conversely, robots have had difficulty incorporating a rich set of prior knowledge when solving complex planning and control problems. In RL, the reward offers an avenue for incorporating prior knowledge.”

Making a robot self-aware is what takes it from flailing its metal arms around to doing tasks in a way that is almost human. It sounds easy until you realize that robots have a hard time “remembering” the kind of prior knowledge that helps our brains make complex decisions.

The team had to teach the droid in an unconventional way, using a programming language that was actually not all that different from simple English. It also had to learn the hard way, because really, what is the most effective way of getting a human to swear they will never do something again?

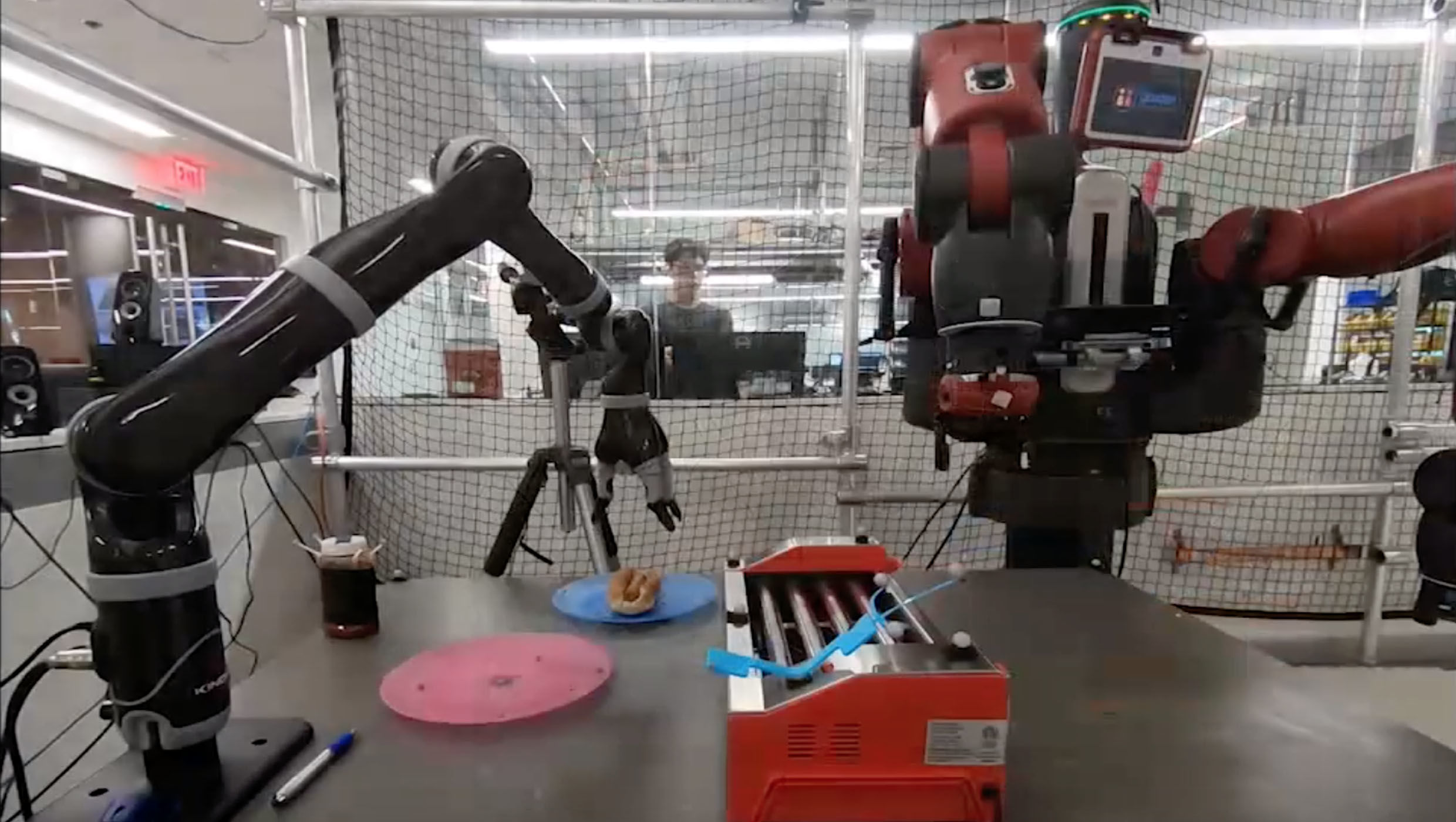

Using a Rethink Robotics Baxter robot equipped with a Kinova Jaco arm, the team set out to see whether what works on humans would also work on a machine.

Someone (or something) who has never cooked and served a hot dog before, like the droid in question, has to learn not only the steps that get it right but also the mistakes to avoid. It couldn’t squeeze the hot dog or the bun too hard. It couldn’t splatter ketchup all over the place. The team wrote instructions that not only gave directions but also defined what things like a hot dog, a grill and a bun were so the AI could recognize them. It can get complicated to tell a computer brain exactly what a grill is and then tell it exactly how long to keep the hot dog on that grill. Becoming self-aware through RL helped it realize which actions to take and which to avoid.

“Experimental results suggest that the reward generated from the formal specification is able to guide an RL agent to learn a satisfying policy … the user only needs to interact with the framework using a rich and high-level language,” Serlin said.
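For the curious, here is a rough idea of how a reward built from a written-down task description can steer a learner. This is only a toy sketch, not the team's framework: the step names, the reward numbers and the use of plain tabular Q-learning are all assumptions made for illustration, and the study's actual specification language is not reproduced here.

```python
import random

# Hypothetical task description: steps that must happen in order,
# plus actions the robot must never take. All names are illustrative.
REQUIRED_ORDER = ["pick_up_hot_dog", "grill", "place_in_bun", "add_ketchup", "serve"]
FORBIDDEN = ["squeeze_too_hard", "splatter_ketchup"]
ACTIONS = REQUIRED_ORDER + FORBIDDEN


def step(state, action):
    """Apply an action. `state` counts the required steps completed so far.

    Returns (next_state, reward, done). The reward values are assumed.
    """
    if action in FORBIDDEN:
        return state, -10.0, True  # safety violation ends the episode
    if action == REQUIRED_ORDER[state]:
        next_state = state + 1
        done = next_state == len(REQUIRED_ORDER)
        return next_state, (10.0 if done else 1.0), done
    return state, -1.0, False  # out-of-order step: small penalty, no progress


def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(len(REQUIRED_ORDER)) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(50):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_plan(q):
    """Follow the highest-valued action from the start state."""
    plan, state = [], 0
    while state < len(REQUIRED_ORDER) and len(plan) < 10:
        action = max(ACTIONS, key=lambda a: q[(state, a)])
        plan.append(action)
        state, _, done = step(state, action)
        if done:
            break
    return plan


if __name__ == "__main__":
    print(greedy_plan(train()))
```

The point of the sketch is that nobody hand-codes the winning sequence. The learner only sees rewards and penalties derived from the description of what must happen and what must never happen, and the order of steps falls out of training.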

So does that mean robots won’t invade the planet if they aren’t trained to do so? Please tell us it does.

(via Science Robotics)