Give a hand to this new wearable glove that translates sign language into real speech

Hoping to provide a valuable new tool for the hearing impaired, UCLA bioengineers have created an innovative, wearable glove-like device capable of translating American Sign Language into English speech in real time, via a smartphone app.

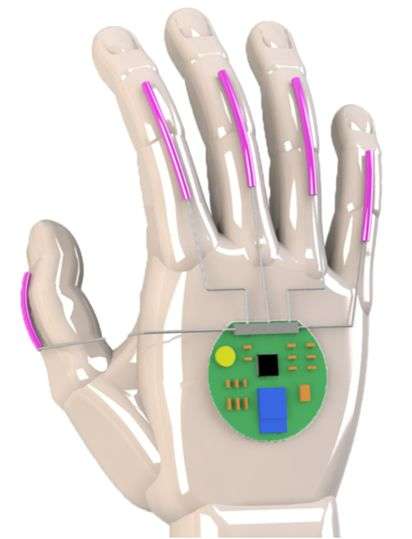

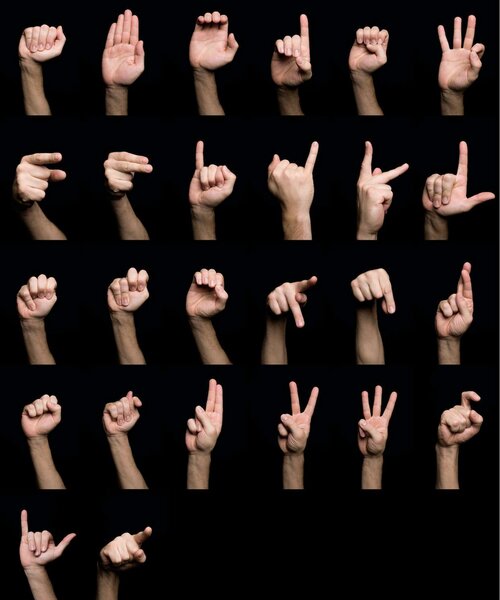

The results of the team's research were published this week in the online journal Nature Electronics. Their unique system employs a pair of customized gloves equipped with thin, stretchable sensors that span the length of each of the five digits. The sensors, which are fabricated from electrically conducting yarns, interpret hand motions and finger positions that represent individual letters, numbers, words, and phrases.

“Our hope is that this opens up an easy way for people who use sign language to communicate directly with non-signers without needing someone else to translate for them,” explained Jun Chen, an assistant professor of bioengineering at the UCLA Samueli School of Engineering and principal investigator on the project. “In addition, we hope it can help more people learn sign language themselves. Previous wearable systems that offered translation from American Sign Language were limited by bulky and heavy device designs or were uncomfortable to wear.”

In 2016, a pair of students from the University of Washington invented a less-sophisticated glove that translated sign language into text or speech, with their invention earning them a $10,000 Lemelson-MIT Student Prize.

In the new UCLA method (as outlined in the video above), finger gestures and articulations are converted into electrical signals and delivered to a thin, coin-sized circuit board strapped to the back of the wrist. The board transmits the signals wirelessly to a smartphone, which in turn translates them into spoken words at a rate of approximately one word per second.

The device, created to aid the deaf community, is also supplemented by adhesive sensors placed on testers’ faces, between their eyebrows and on the side of their mouths, to capture the facial expressions that are an essential component of American Sign Language. The prototype was manufactured using featherweight, inexpensive stretchable polymers and flexible, cost-effective electronic sensors.

Chen and the paper’s co-authors Zhihao Zhao, Kyle Chen, Songlin Zhang, Yihao Zhou, and Weili Deng are all members of the Wearable Bioelectronics Research Group that Chen leads at UCLA. They were assisted by Jin Yang of China’s Chongqing University.

For testing, the researchers worked with four people who are hearing impaired and fluent in American Sign Language. The volunteers repeated each hand gesture 15 times so that a unique machine-learning algorithm could learn to accurately translate these signs into the letters, numbers, and words they correspond to.
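For readers curious how repeated gestures can teach software to recognize a sign, here is a rough sketch in Python. It is not the UCLA team's algorithm, which the article does not describe in detail. It swaps in a simple nearest-centroid classifier, and the sensor values, the three example signs, and every function name are invented for illustration. The only detail taken from the research is the 15 repetitions per gesture.

```python
import math
import random

REPETITIONS = 15  # each gesture was repeated 15 times, per the article


def make_samples(template, rng, noise=0.05):
    """Simulate repeated, slightly noisy recordings of one sign (made-up data)."""
    return [[v + rng.gauss(0, noise) for v in template] for _ in range(REPETITIONS)]


def train(samples_by_sign):
    """Average the repetitions of each sign into a single centroid vector."""
    centroids = {}
    for sign, samples in samples_by_sign.items():
        centroids[sign] = [sum(col) / len(samples) for col in zip(*samples)]
    return centroids


def classify(reading, centroids):
    """Return the sign whose centroid is closest to the new sensor reading."""
    return min(centroids, key=lambda sign: math.dist(reading, centroids[sign]))


# Hypothetical finger-bend readings (thumb to pinky, 0 = straight, 1 = fully bent).
# These numbers are assumptions, not measurements from the study.
templates = {
    "A": [0.2, 0.9, 0.9, 0.9, 0.9],
    "B": [0.8, 0.1, 0.1, 0.1, 0.1],
    "L": [0.1, 0.1, 0.9, 0.9, 0.9],
}

rng = random.Random(0)  # fixed seed so the simulated data is reproducible
training = {sign: make_samples(t, rng) for sign, t in templates.items()}
centroids = train(training)

prediction = classify([0.15, 0.12, 0.88, 0.91, 0.87], centroids)
print(prediction)
```

With these made-up numbers, the new reading lands closest to the averaged "L" recordings. A real system would have to cope with motion over time, two hands, and facial sensors, which is well beyond this toy example.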

Right now, the UCLA invention recognizes 660 signs, including every letter of the alphabet and numbers 0 through 9. However, in order to be used efficiently in real-world situations, the hi-tech glove will need to learn tens of thousands more signs, in addition to the variations and exceptions to the American Sign Language system.

UCLA has already filed for a patent on the technology, and Chen estimates that it will be at least another five years before this amazing glove is marketed commercially to the public.