
JWST is now fully focused

Witness the power of a fully focused and very nearly operational JWST.

The James Webb Space Telescope is now fully focused.

!!

The huge observatory was launched on Dec. 25, 2021 and reached its parking orbit at the L2 point, about 1.5 million kilometers from the Earth in the direction opposite the Sun, on Jan. 24, 2022. While on its way it began deploying its complicated sunshield and mirror arrays. After that, the 18 hexagonal mirror segments began a long phase of adjusting their positions to align them, and then to focus them so that star images were crisp.

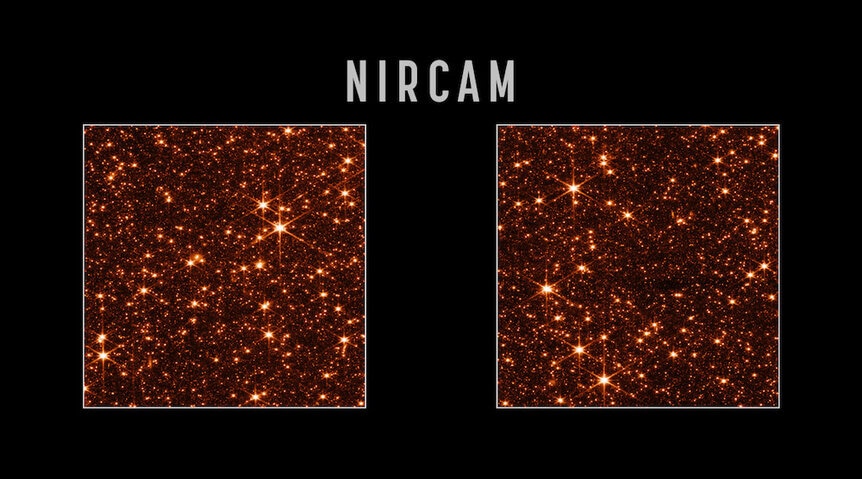

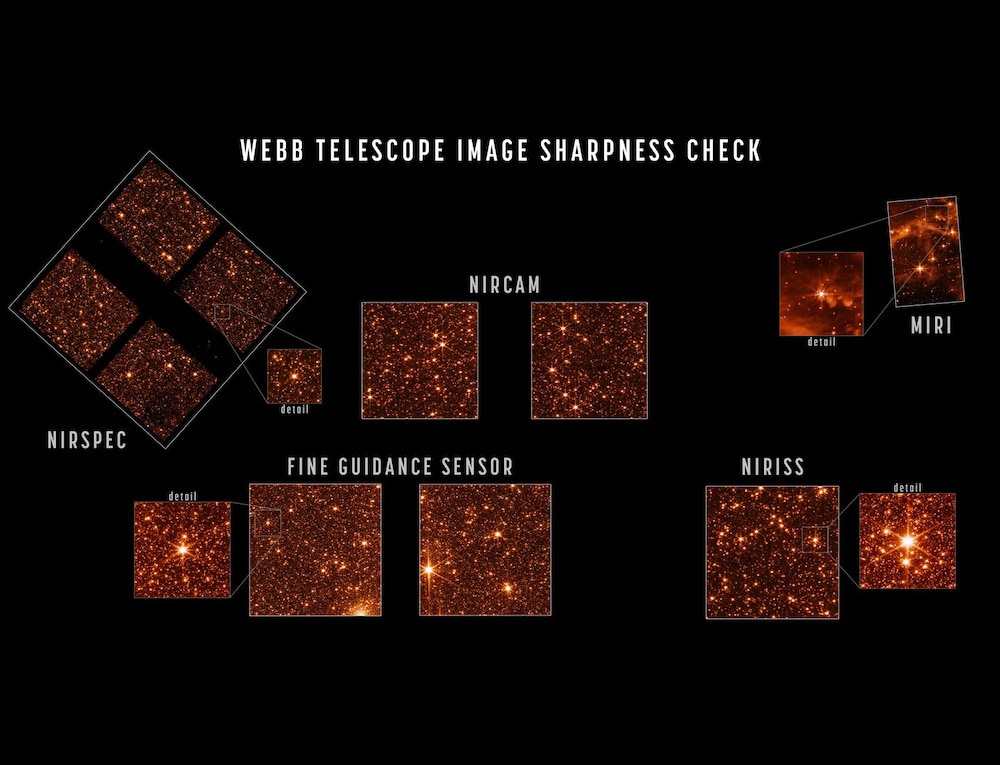

That phase was completed in mid-March 2022. But the work wasn’t done yet! All the testing up to that point was done using the Near-Infrared Camera, or NIRCAM, one of several scientific instruments on board JWST. It’s the workhorse camera, and the one that will produce most of the pretty pictures you’ll see from JWST in the future.

But the observatory has four such scientific instruments, each with its own requirements. Once the NIRCAM tests showed that all the mirrors were aligned and focused, it was time to get things working for the other three.

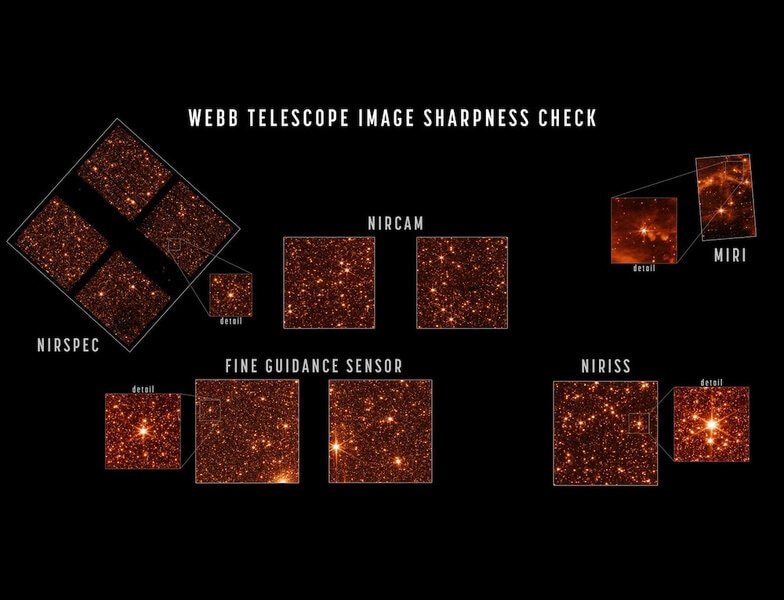

And now, all of them are focused! Even better, as NASA's article on the milestone states, "The optical performance of the telescope continues to be better than the engineering team’s most optimistic predictions." That's pretty great to see.

To make sure plenty of stars landed in each instrument’s field of view, engineers pointed JWST at the Large Magellanic Cloud, or LMC, a satellite galaxy of the Milky Way about 170,000 light-years from us. It has billions of stars, and that guarantees that thousands will be seen by each detector. As you can see.

NIRCAM is sensitive to wavelengths of light from about 0.6 to 5 microns. The short end is what our eyes see as red light, but we’re only sensitive out to about 0.75 microns or so. Everything past that is infrared. NIRCAM will be used to look at, well, everything. Stars, exoplanets, galaxies near and very far, star-forming gas clouds, solar system planets, brown dwarfs… pretty much anything an astronomer wants to see, NIRCAM can take a look.

It has two detectors, each looking at one of two adjacent spots on the sky. The field of view is 2.2 arcminutes on a side. An arcminute is a unit of angle astronomers use to measure an object’s apparent size; for comparison, the full Moon is about 30 arcminutes across, and the smallest thing the human eye can see is about 1 arcminute. NIRCAM’s small pixel size will allow it to get high-resolution images, meaning very fine detail.
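To put those angles in perspective, here is a minimal back-of-the-envelope sketch in Python. It uses the small-angle approximation (physical width ≈ distance × angle in radians) with the approximate numbers quoted in this article: a 2.2-arcminute field, a 30-arcminute full Moon, and the roughly 170,000 light-year distance to the LMC. The function and variable names are made up for illustration.

```python
import math

ARCMIN_PER_DEGREE = 60.0


def arcmin_to_radians(arcmin: float) -> float:
    """Convert an angle in arcminutes to radians."""
    return math.radians(arcmin / ARCMIN_PER_DEGREE)


def physical_width(distance: float, angle_arcmin: float) -> float:
    """Small-angle approximation: width = distance * angle (in radians).

    The result is in the same unit as `distance`.
    """
    return distance * arcmin_to_radians(angle_arcmin)


# Approximate values quoted in the article.
NIRCAM_FIELD_ARCMIN = 2.2
FULL_MOON_ARCMIN = 30.0
LMC_DISTANCE_LY = 170_000.0

fields_across_moon = FULL_MOON_ARCMIN / NIRCAM_FIELD_ARCMIN
field_width_ly = physical_width(LMC_DISTANCE_LY, NIRCAM_FIELD_ARCMIN)

print(f"Fields needed to span the full Moon: {fields_across_moon:.1f}")
print(f"Width of one field at the LMC: {field_width_ly:.0f} light-years")
```

With these inputs it takes between 13 and 14 such fields laid side by side to span the full Moon, and a single field covers a patch of the LMC a bit over 100 light-years wide.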

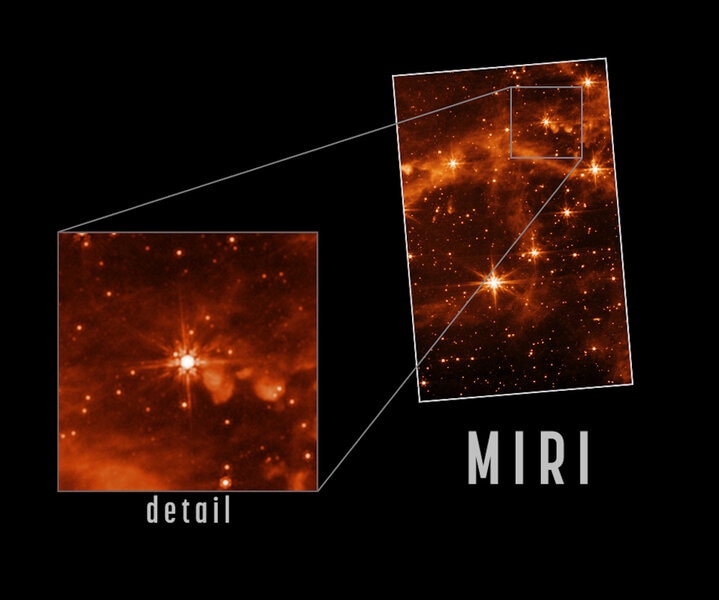

The Mid-Infrared Instrument, or MIRI, can detect much longer wavelengths, from 5 microns out to 28 microns. That makes it more sensitive to colder objects that emit at those wavelengths, including brown dwarfs, dust around other stars and in distant galaxies, and cold, icy trans-Neptunian objects that orbit the Sun.
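The link between longer wavelengths and colder objects can be sketched with Wien's displacement law, which says an idealized blackbody's emission peaks at a wavelength inversely proportional to its temperature. The snippet below is only a rough illustration: real dust clouds, brown dwarfs, and icy bodies are not perfect blackbodies, and the function name is made up for this example.

```python
# Wien's displacement constant, in micron * kelvin.
WIEN_CONSTANT_MICRON_KELVIN = 2897.77


def peak_temperature_kelvin(wavelength_microns: float) -> float:
    """Temperature of an ideal blackbody whose emission peaks at this wavelength."""
    if wavelength_microns <= 0:
        raise ValueError("wavelength must be positive")
    return WIEN_CONSTANT_MICRON_KELVIN / wavelength_microns


# Wavelength limits quoted in the article for NIRCAM and MIRI.
band_edges = [
    ("NIRCAM short end", 0.6),
    ("MIRI short end", 5.0),
    ("MIRI long end", 28.0),
]

for label, wavelength in band_edges:
    temperature = peak_temperature_kelvin(wavelength)
    print(f"{label}: {wavelength} microns peaks for a blackbody near {temperature:.0f} K")
```

Under this idealization, a 5-micron peak corresponds to roughly 580 K and a 28-micron peak to a bit over 100 K, far colder than the thousands of kelvins of a star like the Sun.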

In the focused image you can see stars in the LMC as well as gas and dust around them; the galaxy is lousy with the building blocks of stars and is very busily making them. The detail in these images will help astronomers understand how stars form from this material, and how this stuff evolves over time.

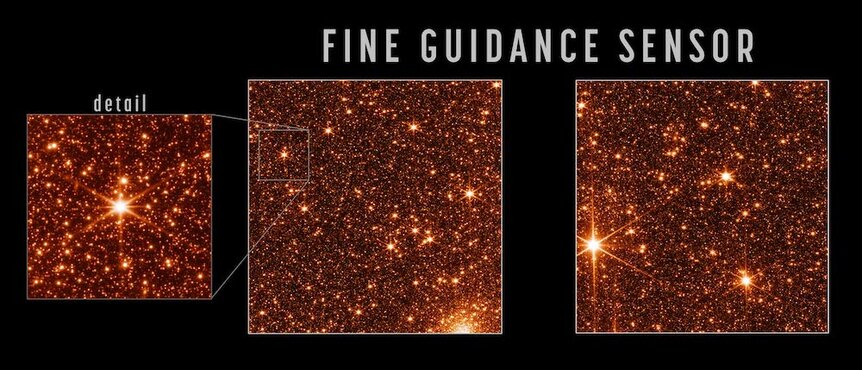

The Fine Guidance Sensor, or FGS, isn’t technically a scientific instrument, but an engineering one: It’s designed to lock on to guide stars and keep the telescope pointed at its targets with incredible accuracy. However, it can be used to make images, as these test shots show. I like the star cluster you can see at the bottom of the left-hand image; the LMC is loaded with clusters and this gives a nice indication of the high resolution these cameras afford.

The NIRISS is the Near-Infrared Imager and Slitless Spectrograph, another instrument that is in the same assembly as the FGS. It can take images as well but its main function is to take spectra, breaking up the incoming light into individual wavelengths, to allow astronomers to measure a whole slew of characteristics of astronomical objects, including chemical composition, distance, velocity, temperature, and much more. All the instruments on JWST can do this to one degree or another, but NIRISS and the Near-Infrared Spectrograph, or NIRSPEC, are designed specifically to maximize this potential.
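As one small illustration of how a spectrum yields a velocity, here is a sketch of the non-relativistic Doppler formula: the fractional shift in a spectral line's wavelength, multiplied by the speed of light, gives the speed along the line of sight. The rest wavelength below is close to hydrogen's Paschen-alpha line in the near-infrared, while the "observed" wavelength is a hypothetical value chosen purely for the example, not a JWST measurement. The function name is also made up.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def radial_velocity_km_s(rest_wavelength: float, observed_wavelength: float) -> float:
    """Non-relativistic Doppler velocity along the line of sight.

    Positive means the source is moving away (redshift), negative means it is
    approaching (blueshift). Both wavelengths must use the same unit. Only
    valid for speeds much smaller than the speed of light.
    """
    if rest_wavelength <= 0:
        raise ValueError("rest wavelength must be positive")
    shift = (observed_wavelength - rest_wavelength) / rest_wavelength
    return SPEED_OF_LIGHT_KM_S * shift


REST_MICRONS = 1.875        # approximately hydrogen Paschen-alpha
OBSERVED_MICRONS = 1.8766   # hypothetical measured value, for illustration only

velocity = radial_velocity_km_s(REST_MICRONS, OBSERVED_MICRONS)
print(f"Line-of-sight velocity: {velocity:.0f} km/s")
```

A shift that small, less than a tenth of a percent of the wavelength, already corresponds to a few hundred kilometers per second, which is why spectrographs need such careful wavelength calibration.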

So when will we start to see science images and data? Not for two more months! Why the wait? These are fantastically complex machines (they cost hundreds of millions of dollars to design, build, and test), each capable of observing the sky in many different ways, and each mode has to be individually tested and calibrated. It’s a painstaking process that can’t be rushed.

I have some experience here; in 1997 the Space Telescope Imaging Spectrograph (STIS) was installed on Hubble, and I was part of a large team that worked on commissioning it. That took weeks, months really, because STIS had dozens of different ways to look at the sky, and each one had to be tested in turn. I was in the middle of all that, comparing on-orbit observations with data we had collected for years testing it on the ground, making sure nothing was awry and that we understood what our camera was doing.

And that was just one instrument; JWST has five. You may be impatient, but from my perspective getting everything ship-shape and tightened up in two months will be miraculous. A lot of engineers and scientists will be working very long hours every day for those two months, sweating out details to make sure these incredibly sensitive machines work at their absolute best.

And then, come July or so, we will all reap the benefits.