
Big, bright, massive stars were more common when the Universe was young

New study shows big beasts are rarer nowadays.

One of the more irritating aspects of the Universe is that it changes over time.

Oh sure, we owe our very existence to this fact, but it also means that studying the cosmos can be difficult. It’s not all bad: Because it takes time for light to cross the immense distances of space, when we look at very distant objects we see them as they were when they were younger. That’s extremely useful for understanding how the Universe was when it was just getting started, and how it’s changed since then.

However, objects that far away are faint and small, which can make them impossible to study in detail. So astronomers study the local Universe, where things are big and bright and easier to examine, and then extrapolate what they learn to the distant, young Universe. In doing so, we assume that things back then were similar to the way they are now.

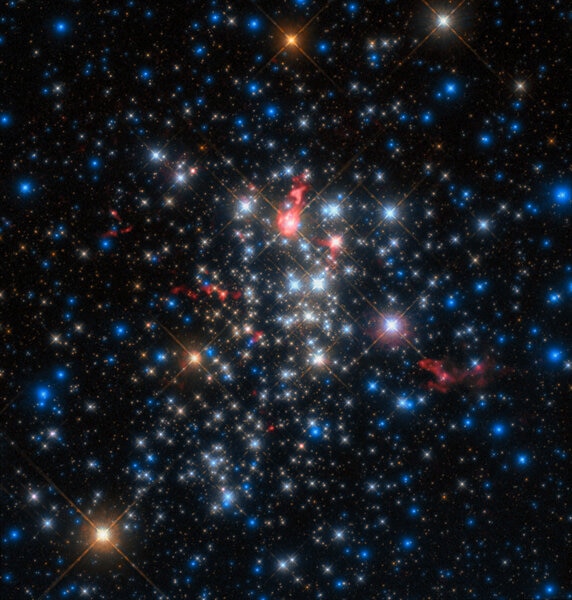

But if objects change over time, this assumption can get you into trouble. A new result just published indicates that this is exactly what’s happening in an important aspect of astrophysics: How stars are born. In this work, scientists show that back in the day, stars were born differently: Overall, massive stars were more common in the early Universe than they are now. That may not sound like a big deal, but it very much is, because massive stars have an outsize influence on their galaxies, affecting how many supernovae go off, how many black holes form, and more.

What they looked at is something called the initial mass function, which describes how many stars of a given mass are born out of a gas cloud. I’ve written about this before:

> If you looked at our Milky Way galaxy right now and counted the stars in it, you'd find something like 200 billion, give or take. But they're not all the same: Some are like the Sun, a few are more massive, very few are really massive (say, 100 times the mass of the Sun), and a whole lot are dinky ones like red dwarfs with half the Sun's mass or less. In broad terms, 80% of all stars are less massive than the Sun, 10% are around the same mass, and 10% are more massive.
>
> That's what we call a stellar mass function: how many stars fall in a bin of a given mass. But it's actually more complicated than this. Stars change. They're born, they die. High-mass stars don't live long, while the lowest-mass ones can live for trillions of years. So the mass function changes over time. There's an initial mass function [or IMF], which is, say, how many stars of each mass are born out of a single giant cloud of gas and dust at the same time. That distribution then evolves as the stars themselves change.
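To make the idea of a mass function concrete, here is a minimal Python sketch. It assumes a single Salpeter power law (dN/dM ∝ M^-2.35 between 0.1 and 100 solar masses) and a rough lifetime scaling of 10 billion years × M^-2.5; both are textbook simplifications, not the models used in the study. It draws a batch of newborn stars, counts them in mass bins (the initial mass function), then removes the stars that would have died after a billion years to show how the present-day mass function drifts away from the initial one. The names `sample_masses`, `lifetime_gyr`, and `mass_function` are illustrative only.

```python
import random

# Assumed IMF: a single Salpeter power law, dN/dM ∝ M^-2.35, between
# 0.1 and 100 solar masses (a simplification; real IMFs flatten at low mass).
ALPHA = 2.35
M_MIN, M_MAX = 0.1, 100.0


def sample_masses(n, alpha=ALPHA, m_min=M_MIN, m_max=M_MAX, seed=42):
    """Draw n stellar masses (solar masses) by inverse-transform sampling a power law."""
    rng = random.Random(seed)
    a = 1.0 - alpha
    lo, hi = m_min ** a, m_max ** a
    return [(lo + rng.random() * (hi - lo)) ** (1.0 / a) for _ in range(n)]


def lifetime_gyr(mass):
    """Rough main-sequence lifetime: about 10 Gyr for the Sun, scaling as M^-2.5 (assumed)."""
    return 10.0 * mass ** -2.5


def mass_function(masses, edges):
    """Count how many stars fall in each mass bin [edges[i], edges[i+1])."""
    counts = [0] * (len(edges) - 1)
    for m in masses:
        for i in range(len(edges) - 1):
            if edges[i] <= m < edges[i + 1]:
                counts[i] += 1
                break
    return counts


edges = [0.1, 0.5, 1.0, 2.0, 8.0, 100.0]
born = sample_masses(100_000)
initial = mass_function(born, edges)

# After 1 Gyr, drop every star whose lifetime is shorter than that age.
age = 1.0
alive = [m for m in born if lifetime_gyr(m) > age]
present_day = mass_function(alive, edges)

for lo, hi, n0, n1 in zip(edges[:-1], edges[1:], initial, present_day):
    print(f"{lo:5.1f}-{hi:5.1f} Msun: born {n0:6d}, still shining {n1:6d}")
```

Running it should show the bins below 2 solar masses untouched, while the highest-mass bin empties out completely, since in this toy model every star above about 2.5 solar masses has already died by 1 billion years.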

The idea that there could be a single, universal IMF is tempting, because that would make things easy. However, it’s far more likely that the IMF itself has changed over time as conditions in the Universe changed. For example, the Universe is expanding and cooling, which means in the past it was smaller and warmer. We know that dust in galaxies (grains of rocky and/or sooty material created when stars die, which form clouds that also take part in star formation) tends to be warmer the farther away the galaxy is, that is, the further back in time we’re seeing it. That higher ambient temperature can definitely affect the way stars are born: Warmer gas can better hold itself up against its own gravity, so a clump needs more mass before it can collapse into a star. But is this really what happens in the real Universe?
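The claim that warmer gas needs more mass to collapse can be made quantitative with the Jeans mass, the minimum mass a uniform cloud needs before its own gravity overwhelms its thermal pressure. A common textbook form is M_J = (5kT / (Gμm_H))^(3/2) × (3 / (4πρ))^(1/2), so at a fixed density the Jeans mass grows as T^(3/2). The sketch below assumes a mean molecular weight μ of 2.33 and a density of 10^4 particles per cubic centimetre, typical of dense molecular cloud cores; these are illustrative values, not numbers from the study, and `jeans_mass` is a hypothetical helper.

```python
import math

# Physical constants in SI units.
K_B = 1.380649e-23      # Boltzmann constant, J/K
G = 6.674e-11           # gravitational constant, m^3 kg^-1 s^-2
M_H = 1.6735e-27        # mass of a hydrogen atom, kg
M_SUN = 1.989e30        # solar mass, kg


def jeans_mass(temp_k, n_per_m3, mu=2.33):
    """Minimum mass (kg) a uniform cloud needs for gravity to beat thermal pressure.

    temp_k: gas temperature in kelvin
    n_per_m3: particle number density per cubic metre
    mu: assumed mean molecular weight (about 2.33 for cold molecular gas)
    """
    rho = mu * M_H * n_per_m3  # mass density, kg/m^3
    return (5 * K_B * temp_k / (G * mu * M_H)) ** 1.5 * (3 / (4 * math.pi * rho)) ** 0.5


n = 1e10  # 10^4 particles per cubic centimetre, an assumed dense-core value
cold = jeans_mass(10.0, n)
warm = jeans_mass(20.0, n)
print(f"10 K: {cold / M_SUN:.1f} Msun, 20 K: {warm / M_SUN:.1f} Msun")
print(f"ratio: {warm / cold:.2f}")  # doubling T multiplies M_J by 2**1.5
```

Doubling the temperature from 10 K to 20 K raises the Jeans mass by a factor of 2^1.5, about 2.8, which is one way a warmer early Universe could tilt star formation toward heavier stars.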

This is what the new work investigated [link to paper]. The team looked at a staggering 140,000 galaxies in the COSMOS catalog, an immense database that records the characteristics of over a million galaxies. Here’s the trick: Massive stars are very bright and blue and don’t live long. Lower-mass stars are faint, orange and red, and live a very long time. If you assume some initial mass function for a galaxy, then use models of how stars live and die, you can predict what colors the galaxy should have at different times. Then you check the database and see what colors the galaxies actually are.
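The color trick can be sketched with a toy model. The assumptions here are deliberately crude and are not the stellar population models the team used: a Salpeter IMF, luminosity scaling as M^3.5, lifetime as 10 billion years × M^-2.5, and "blue" light defined as light from stars above 2 solar masses, as a stand-in for a real color. For a population born all at once, the code computes what fraction of its light still comes from those massive stars at a given age; the function names are hypothetical.

```python
# Assumptions (simplifications for illustration, not the study's models):
# a Salpeter power-law IMF, luminosity L ∝ M^3.5, lifetime ≈ 10 Gyr × M^-2.5,
# and "blue" light meaning light from stars above 2 solar masses.
ALPHA = 2.35
M_MIN, M_MAX = 0.1, 100.0
BLUE_CUT = 2.0


def turnoff_mass(age_gyr):
    """Mass of the most massive star still alive at a given age (solar masses)."""
    return min(M_MAX, (10.0 / age_gyr) ** (1 / 2.5))


def light_between(m_lo, m_hi, alpha=ALPHA, lum_exp=3.5):
    """Integral of L(M) * dN/dM = M^lum_exp * M^-alpha from m_lo to m_hi."""
    if m_hi <= m_lo:
        return 0.0
    p = lum_exp - alpha + 1.0
    return (m_hi ** p - m_lo ** p) / p


def blue_fraction(age_gyr, alpha=ALPHA):
    """Share of a single-age population's light that comes from massive, blue stars."""
    m_top = turnoff_mass(age_gyr)
    total = light_between(M_MIN, m_top, alpha)
    blue = light_between(BLUE_CUT, m_top, alpha)
    return blue / total


for age in (0.01, 0.1, 1.0, 10.0):
    print(f"age {age:5.2f} Gyr: {blue_fraction(age):.3f} of the light from stars > 2 Msun")
```

The blue share falls steeply with age as the massive stars die off, and a flatter IMF (a smaller slope, meaning relatively more massive stars) keeps it higher at any given age. That is why a galaxy's colors carry information about its IMF.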

Until now, astronomers have used a pretty standard IMF to do this kind of work. What’s new is that the team let the IMF itself be a variable, changing it in different ways to see what best fits the actual observations.
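Letting the IMF vary amounts to treating its shape as a free parameter and asking which value best matches the data. The sketch below does this with a simple grid search over the power-law slope, using a toy forward model (the share of light from stars above 2 solar masses, with L ∝ M^3.5) and mock observations generated from a known slope so the fit can be checked. The turnoff masses, the slope grid, and all function names are hypothetical; the actual study fit real galaxy photometry with far more detailed models.

```python
def blue_fraction(alpha, m_top, m_min=0.1, cut=2.0):
    """Toy forward model (assumed): share of light from stars above `cut` solar masses,
    for a power-law IMF with slope alpha, L ∝ M^3.5, and stars alive up to m_top."""
    p = 3.5 - alpha + 1.0

    def light(lo, hi):
        # Integral of M^3.5 * M^-alpha between lo and hi.
        return (hi ** p - lo ** p) / p if hi > lo else 0.0

    return light(cut, m_top) / light(m_min, m_top)


def fit_slope(observed, m_tops, candidates):
    """Grid search: return the IMF slope whose predictions best match the data."""
    def misfit(alpha):
        return sum((blue_fraction(alpha, m) - obs) ** 2 for m, obs in zip(m_tops, observed))
    return min(candidates, key=misfit)


# Mock "observations" made from a known slope, so we can check the fit recovers it.
m_tops = [15.0, 6.0, 3.0]  # hypothetical turnoff masses for three galaxies
true_alpha = 2.0
observed = [blue_fraction(true_alpha, m) for m in m_tops]

candidates = [round(1.5 + 0.05 * i, 2) for i in range(31)]  # slopes from 1.5 to 3.0
best = fit_slope(observed, m_tops, candidates)
print(f"best-fitting slope: {best}")
```

Because the mock data were built with a slope of 2.0, the grid search recovers 2.0 exactly. With real, noisy photometry the squared differences would normally be weighted by the measurement uncertainties, as in a chi-squared fit.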

What they found is that in distant galaxies, massive stars were born at higher rates than they are now. That follows from their more general conclusion that the IMF depends on dust temperature, which was higher in the past.

So why is this important? Massive stars have a huge impact on their environments. For one thing, while alive, they blow out fierce winds of subatomic particles and blast out huge amounts of ultraviolet light, which can erode away the cloud of gas and dust around them (just look at the Orion Nebula for evidence of that). If enough of them form, they can even affect the galaxy as a whole, changing the way stars form over tens of thousands of light-years.

And when they die… they explode. This can trigger star formation in nearby nebulae by compressing their gas until it collapses, or, again, blow material clean out of the galaxy. And afterward they leave behind lots of neutron stars and black holes, so there’s that.

Supernovae also create heavy elements like iron and nickel and other fun things that help build terrestrial planets like Earth, populate them with carbon and oxygen, and seed them with elements necessary for life.

So massive stars have a profound effect on their host galaxies. And our Milky Way galaxy was young once, so it too may have had more massive stars in it than we thought. How does this change what we know about it? That remains to be determined. This study looked at distant galaxies, but it may very well impact what we know about our own.