Do androids dream of electric fish? Some fish use electricity to sense things from far away

So maybe there weren’t any electric fish starring in that neon fish tank scene from Blade Runner, but there is a reason that androids might dream of them.

While glass knifefish might not be so cyberpunk, they do have electric powers, which they use to sense movement in their surroundings and the presence of other fish, even from a distance. What they sense then informs what they do next. NJIT and Johns Hopkins researchers have borrowed another element from science fiction, augmented reality (AR), to figure out the link between the movements fish make when sensing things and the sensory feedback they process. This could eventually influence next-gen motion sensing in robots, so the android thing isn’t too far off.

These unreal fish live in the Amazon river basin, which is swimming with predators like piranhas and other gnarly things full of teeth, so they hide out in refuges and stay still to avoid them. They also tend to follow any changes in the position of their refuge. If debris at the river bottom sways one way, they go with it. Because the research team knew they would not be able to control the behavior of some fish for the sake of studying the response in others, they decided to see how a fish would respond to sensory changes in its refuge. How a fish responded to changes in the refuge would at least be close to how it would respond to the movements of another fish.

When a creature makes movements of its own to gather sensory information, this is called active sensing. Sensory signals that result from a sensory organ moving are known as reafferent feedback. The brain compares reafferent motion signals to the expected result of such movement so the animal can adjust to whatever situation it may be facing. If the refuge of a knifefish is moving in a way that might reveal the fish to predators, the fish will process that as a need to move with the refuge to stay hidden from any passing piranhas or whatever else might want to bite its head off.

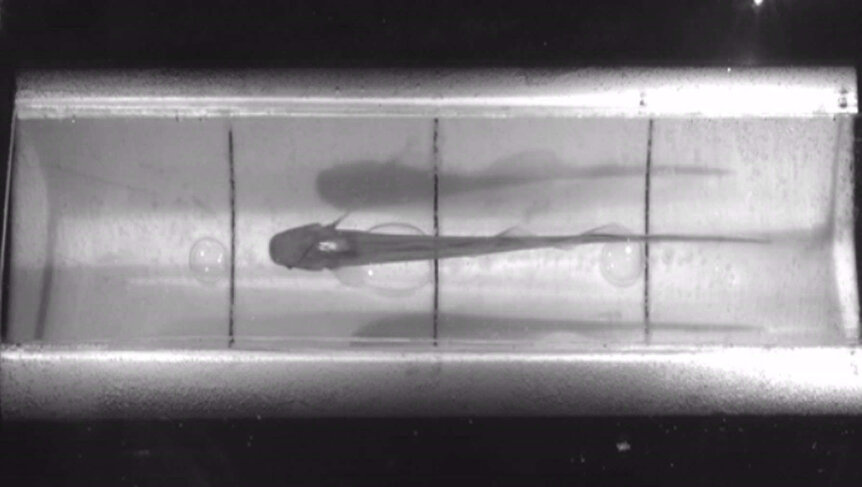

“To investigate the control of movements for active sensing, we created an experimental apparatus for freely swimming weakly electric fish…that modulates the gain of reafferent feedback by adjusting the position of a refuge based on real-time videographic measurements of fish position,” said NJIT biologist and associate professor Eric Fortune, who led a study published in Current Biology.
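The feedback loop Fortune describes can be pictured with a toy calculation. This is a minimal sketch, not the study's actual software: the linear rule, the function names, and the `coupling` parameter are all assumptions made for illustration. The idea is that if the refuge is moved in proportion to the tracked position of the fish, the amount of relative motion the fish senses for each of its own movements gets scaled up or down.

```python
def refuge_position(fish_position: float, coupling: float) -> float:
    """Hypothetical control rule: move the refuge in proportion to the fish.

    `coupling` is an assumed parameter, not a value from the study.
    0 leaves the refuge still, positive values make it follow the fish,
    and negative values make it move the opposite way.
    """
    return coupling * fish_position


def sensory_slip(fish_position: float, coupling: float) -> float:
    """Position of the fish relative to the refuge, i.e. what it can sense."""
    return fish_position - refuge_position(fish_position, coupling)


def reafferent_gain(coupling: float) -> float:
    """Sensed relative motion per unit of the fish's own motion."""
    return 1.0 - coupling


if __name__ == "__main__":
    fish_position = 2.0  # arbitrary units, standing in for a video measurement
    for coupling in (-1.0, 0.0, 0.5):
        slip = sensory_slip(fish_position, coupling)
        gain = reafferent_gain(coupling)
        print(f"coupling={coupling:+.1f}  gain={gain:.1f}  slip={slip:.1f}")
```

In this toy version, a still refuge gives a gain of 1, a refuge that follows the fish lowers the gain, and a refuge that moves against the fish raises it.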

The researchers hypothesized that reafferent feedback would affect the movements of a fish because of active sensing. They predicted that if its refuge changed in any way, the fish would pick up on those sensory signals right away and adapt accordingly. As the fish tried to tuck themselves into refuges made of plastic scraps, the researchers moved the refuges around to test how well the fish would react. It turned out that the fish were right on when they sensed things were being shifted here and there.

Depending on how much the fish needed to adapt after their surroundings were altered, the back-and-forth movements they make for active sensing would spike or plummet so they could stay hidden. How the fish reacted suggested that they have a dual control system. One feedback control system is for active sensing, so the fish will receive the sensory cues from changes in its refuge, while the other system processes those cues and works out whatever action will keep the fish protected by the refuge (even in the absence of predators).

In the future, robots that are programmed to process and react to sensory cues this way could be extremely helpful to humans. Whether they are android replicants crying tears in the rain remains to be seen.