
NASA’s new AI can stare at the sun without shades - and without damaging its vision

When you were a kid, were you ever told not to look directly into the flaming eye of the Sun? It can be almost as dangerous for solar telescopes.

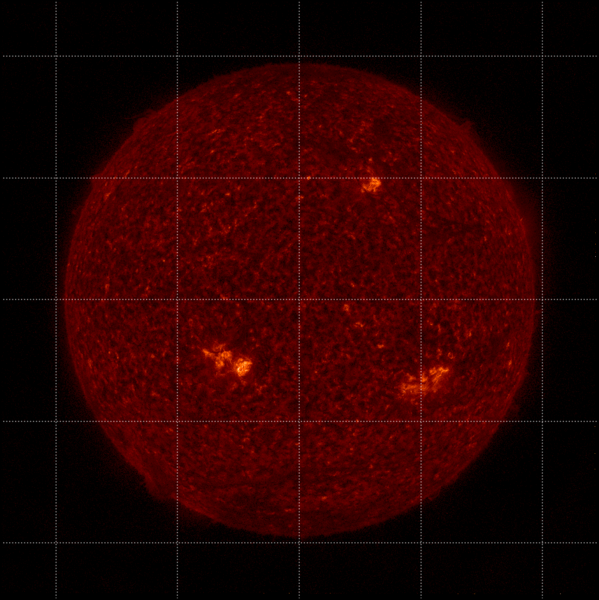

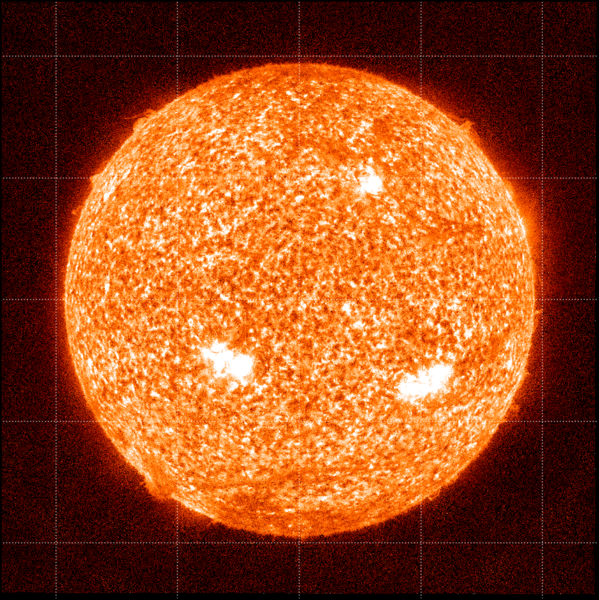

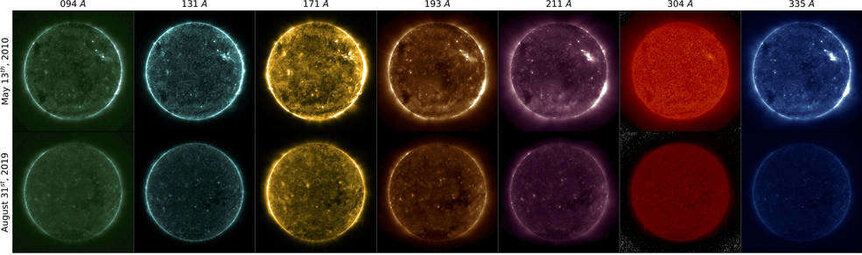

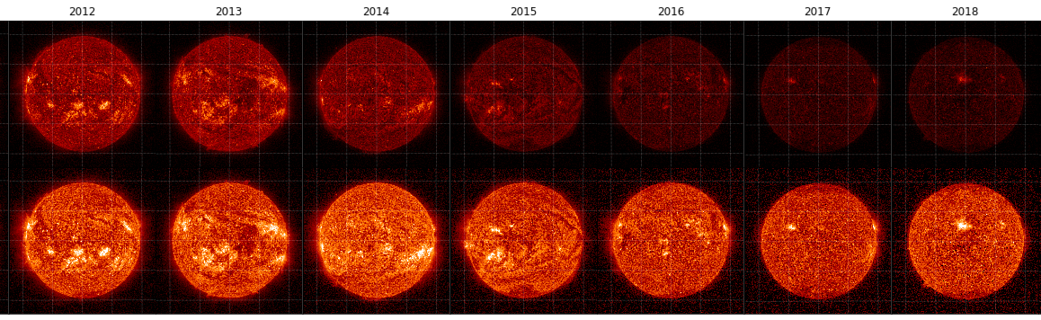

The Atmospheric Imaging Assembly, or AIA, has been staring right into those flames for over a decade aboard NASA's Solar Dynamics Observatory (SDO). AIA observes the Sun in two UV wavelengths, one visible-light wavelength, and seven extreme UV (EUV) wavelengths, and UV light is too short in wavelength for the human eye to see. AIA has to suffer for science. The intense light it faces degrades its instruments over time, so its images must be periodically recalibrated.

Sounding rockets, which carry instruments on short flights above Earth's atmosphere, observe the Sun in the same wavelengths so scientists can compare their measurements with what AIA sees. They aren't a perfect solution, though. They do calibrate AIA images and tell scientists on Earth how much those images need to be corrected, but they are expensive to launch and cannot calibrate images continuously. They also can't help deep space missions operating far from Earth.

These setbacks inspired solar physicist Luiz Dos Santos of NASA Goddard to create an AI that can do the same job continuously. He led a study recently published in the journal Astronomy & Astrophysics.

“The artificial intelligence we developed is a machine learning model which identifies how much calibration AIA data needs,” Dos Santos told SYFY WIRE. “After the AIA produces an image from the Sun, our model identifies how degraded this image is and how much correction it needs, enhancing and expanding the capabilities of AIA and possibly future missions.”
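Dos Santos describes the model as estimating how degraded each image is and how much correction it needs. A minimal sketch of that final correction step, assuming degradation acts as a single multiplicative dimming factor per wavelength channel (an illustrative simplification, not the study's published pipeline; the function name `correct_degradation` and the example values are hypothetical), is to divide each channel by its estimated factor:

```python
import numpy as np


def correct_degradation(stack: np.ndarray, dimming: np.ndarray) -> np.ndarray:
    """Undo per-channel multiplicative dimming (hypothetical helper).

    stack:   observed images, shape (n_channels, height, width)
    dimming: estimated dimming factor per channel, shape (n_channels,),
             each in (0, 1], where 1.0 means "no degradation"
    Assumes observed = dimming * true for every pixel in a channel.
    """
    dimming = np.asarray(dimming, dtype=float)
    if dimming.shape != (stack.shape[0],):
        raise ValueError("need exactly one dimming factor per channel")
    if np.any(dimming <= 0) or np.any(dimming > 1):
        raise ValueError("dimming factors must lie in (0, 1]")
    # Broadcast each channel's factor over every pixel of that channel.
    return stack / dimming[:, None, None]


# Example: two channels; the second has lost half its sensitivity.
observed = np.array([[[10.0, 20.0]], [[5.0, 15.0]]])  # shape (2, 1, 2)
corrected = correct_degradation(observed, np.array([1.0, 0.5]))
print(corrected)
```

Here the first channel is left untouched and the second channel's pixel values double, restoring the brightness lost to a 50 percent dimming. Using one factor per channel rather than one global factor reflects that different wavelengths can degrade at different rates.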

On the Sun, structures often show up in more than one wavelength, and AIA's four telescopes each observe in at least two wavelengths. This has allowed scientists to observe the solar outer atmosphere, or corona (which is mysteriously hotter than the surface), like never before. Phenomena from flares to solar rain occur in the corona. AIA data is meant to give more insight into the physics behind these phenomena and their effect on space weather, which can disrupt power grids and satellites and expose astronauts to hazardous radiation.

The AI program for AIA was conceptualized and developed at Frontier Development Lab, where AIA Principal Investigator and study co-author Mark Cheung first raised the problem of AIA's degrading instruments. Dos Santos and his team then searched for the type of AI program best suited to what they had in mind: an algorithm that could see what AIA sees, work out how much correction its degraded images need, and regularly beam that information back to Earth.

“We trained the model using single- and multi-wavelength observations,” said Dos Santos. “After the training, we observed improved performance when using the multi-wavelength trained model. We induced it to ‘pay attention’ to the structures across different wavelengths instead of just a single wavelength.”

Seeing in multiple wavelengths also gives the computer brain an advantage in making sense of how AIA sees the same solar structures in a literally different light. To determine how degraded AIA's images were, and therefore how much calibration they needed, the model first had to learn what images of solar features such as flares look like without degradation. Dos Santos then checked the algorithm's results against those from a sounding rocket, and the comparison showed the AI had succeeded at the task it was trained for.
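The article doesn't spell out how the model was taught what an undegraded image looks like. One common approach, assumed here rather than taken from the study, is self-supervised: take observations treated as undegraded, dim each wavelength channel by a known random factor, and train a network to recover those factors from the dimmed images alone. The sketch below only builds such training pairs; the function name `make_training_pairs`, the factor range, and the random stand-in images are all hypothetical, and the neural network itself is omitted.

```python
import numpy as np


def make_training_pairs(clean: np.ndarray, rng: np.random.Generator,
                        low: float = 0.1, high: float = 1.0):
    """Build (dimmed stack, true factors) pairs (hypothetical helper).

    clean: array of shape (n_samples, n_channels, height, width),
           assumed to be free of instrument degradation.
    Each channel of each sample is dimmed by its own random factor drawn
    uniformly from [low, high). A model trained on these pairs would learn
    to predict the factors given only the dimmed images.
    """
    n_samples, n_channels = clean.shape[:2]
    factors = rng.uniform(low, high, size=(n_samples, n_channels))
    # Scale every pixel of a channel by that channel's factor.
    dimmed = clean * factors[:, :, None, None]
    return dimmed, factors


rng = np.random.default_rng(seed=0)
# Random stand-in for a batch of multi-wavelength solar images.
clean = rng.uniform(1.0, 100.0, size=(4, 3, 8, 8))
dimmed, factors = make_training_pairs(clean, rng)
print(dimmed.shape, factors.shape)  # prints (4, 3, 8, 8) (4, 3)
```

Giving each channel its own factor, and feeding all channels to the model together, is one way a network could exploit the cross-wavelength structure Dos Santos describes: the same feature appears in several channels, so a channel that looks unusually dim relative to the others hints at how much it has degraded.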

“Our AI allows for a correct brightness intensity value of solar images and opens a new section of studying spectrometry using AIA images, which was previously impossible due to the degradation,” he said. “It will also allow the calibration of EUV images in deep space missions.”

This tech may not stop at AIA. The researchers want to see the model used with other spacecraft, especially STEREO (Solar Terrestrial Relations Observatory) and Solar Orbiter. In the meantime, keep your shades on.