Telepaths beware: Science is figuring out how to turn thoughts into words

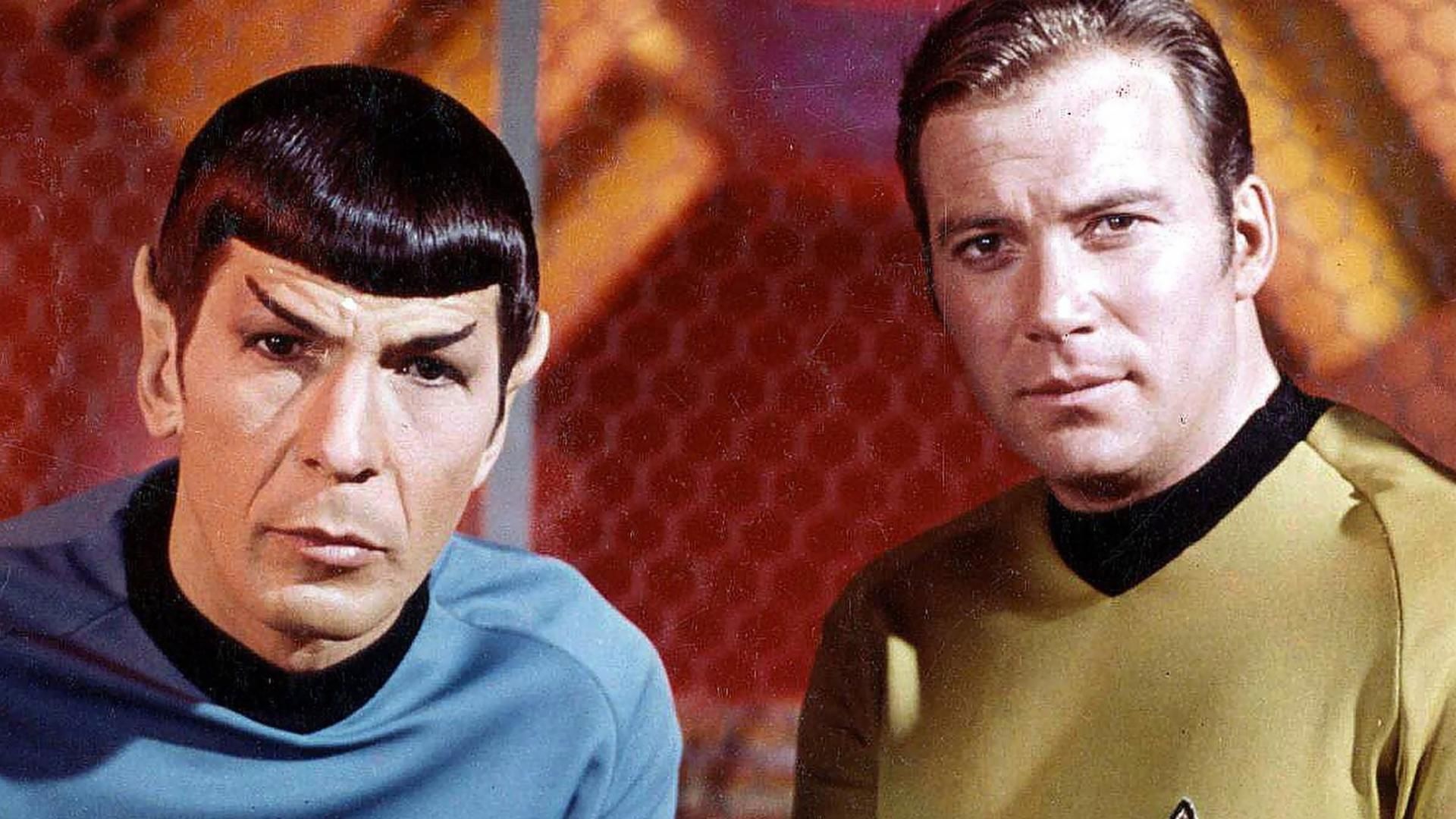

Where’s the fun in having a super-rare paranormal gift if science is going to come along and show everyone else how it all works? In a breakthrough that might even get the attention of a touch-telepathic Star Trek Vulcan, researchers have devised a way to convert brain waves into speech.

Scientists at Columbia University’s Neural Acoustic Processing Laboratory published their findings in the Nature Research journal Scientific Reports this week after developing and testing a new way of processing brain patterns recorded while a listener hears another person’s speech. Their brain-computer interface (BCI) uses a deep neural network to decode signals recorded from the human auditory cortex, and with it the team was able to reconstruct a speaker’s words from nothing more than the listener’s brain activity.

Admittedly, that’s more than one step short of outright Vulcan telepathy; as the researchers put it, it’s the difference between detecting overt (spoken and overheard) and covert (private) thoughts. But they explain that “[r]econstructing speech from the human auditory cortex creates the possibility of a speech neuroprosthetic to establish a direct communication with the brain and has been shown to be possible in both overt and covert conditions.”

What’s a “speech neuroprosthetic”? It’s an interface between human and machine; in this case, it’s the BCI, the meeting point between the brain’s signals and the technology required to interpret and recognizably repeat them. Applying “recent advances in deep learning with the latest innovations in speech synthesis technologies,” the group used invasive electrocorticography (ECoG) to record the neural activity of epileptic patients “as they listened to continuous speech sounds.”

To produce audio that plays back what the patients had merely heard someone else say, the team had a deep neural network translate the recorded brain activity into the parameters of a speech vocoder, a synthesizer that turns those parameters into sound, and compared that approach with reconstructing an auditory spectrogram (a visual representation of auditory signals). “The DNN [deep neural network]-vocoder combination achieved the best performance (75 percent accuracy), which is 67 percent higher than the baseline system,” the findings state, and it even allowed outside listeners, most of the time, to tell whether the original speakers were male or female.

In practical terms, the emerging technology could assist people with motor disabilities whose cognitive abilities remain intact (the late Stephen Hawking, who lived with ALS, is perhaps the most famous example).

With further refinement, the new approach “opens up the possibility of using this technique as a speech brain-computer interface (BCI) to restore speech in severely paralyzed patients,” the researchers state. “The ultimate goal of a speech neuroprosthesis is to create a direct communication pathway to the brain with the potential to benefit patients who have lost their ability to speak.”

We’re all for that — and we can’t wait until the tech has progressed even further, so we can finally start mind-melding just like Spock.