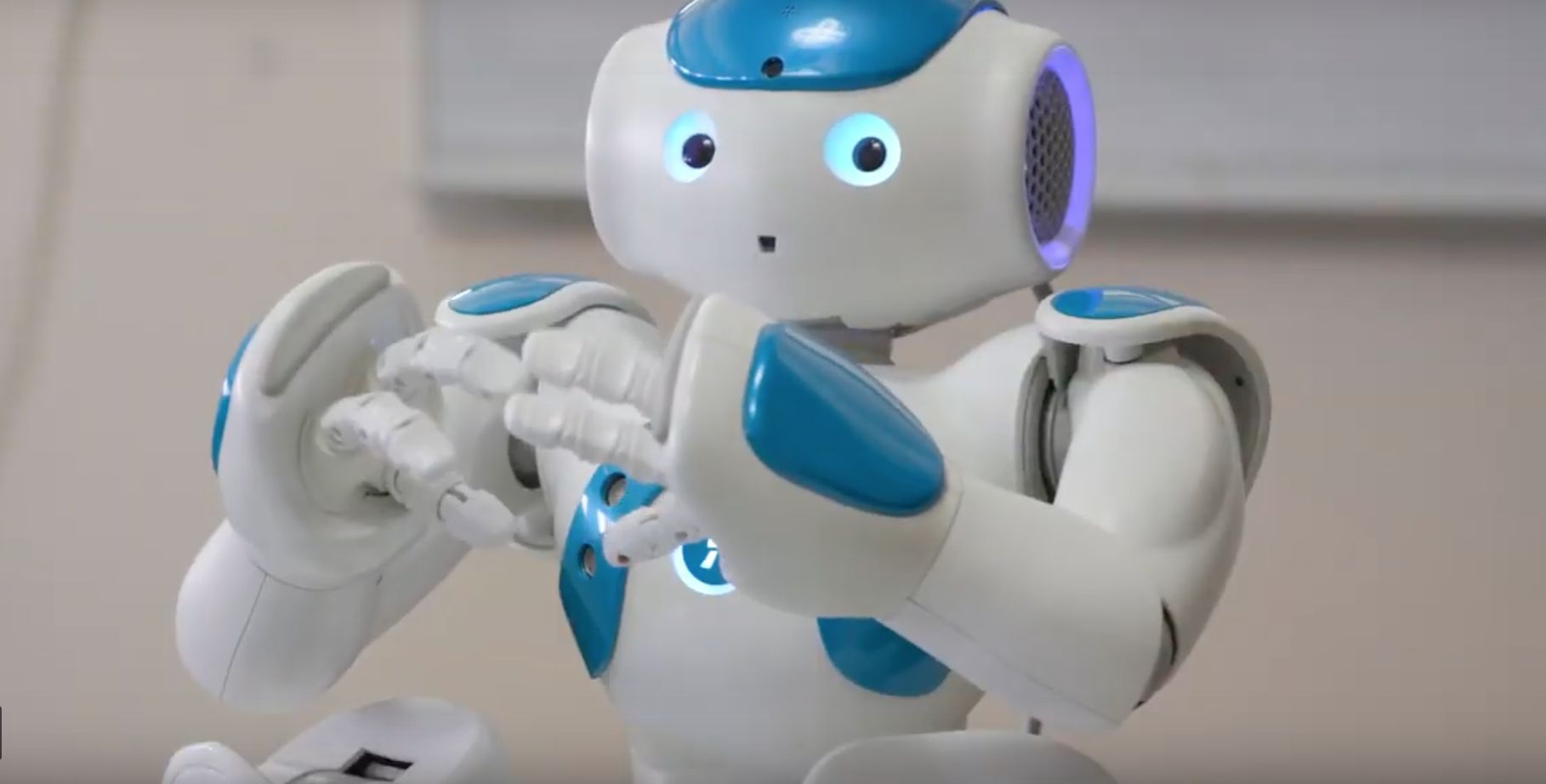

Game-playing robot aids human interaction by admitting mistakes

As next-generation personal robots, digital voice assistants like Apple's Siri, and interactive artificial intelligence devices like Amazon's Alexa become a larger part of daily life, their ability to interact with individuals and groups could be improved by admitting mistakes and learning the simple act of humility.

In a new study published last week in the Proceedings of the National Academy of Sciences, researchers demonstrated that a robot’s social behavior can positively influence the conversational dynamics among the human members of a human–robot group, showing that a robot can genuinely shape human–human interaction.

Graduate students at Yale University's Institute for Network Science (YINS) formed teams of three humans and one robot to play a tablet-based game in which every participant had to succeed or the entire group would lose. When the robot randomly failed and expressed regret, sorrow, or vulnerability, it encouraged a much greater range of communication among teammates than when the losing bot remained silent or made neutral statements such as tallying the score.

“We know that robots can influence the behavior of humans they interact with directly, but how robots affect the way humans engage with each other is less well understood,” said Margaret L. Traeger, a Ph.D. candidate in sociology and lead study author. “Our study shows that robots can affect human-to-human interactions. In this case, we show that robots can help people communicate more effectively as a team.”

While earlier studies have compiled data on one-to-one interactions between robots and humans, very little is known about what happens when a robot is placed in a group. The Yale study concludes that a robot’s social behavior, in this case vulnerable statements made by the unsuccessful robot after each round of the game, does influence a group's conversational dynamics.

The robot's vulnerable statements came in three varieties: self-disclosure, personal story, and humor. In self-disclosure mode the robot offered: “Sorry guys, I made the mistake this round. I know it may be hard to believe, but robots make mistakes too.” Personal stories had the robot sharing information about earlier experiences, such as: “Awesome! I bet we can get the highest score on the scoreboard, just like my soccer team went undefeated in the 2014 season!” Vulnerable expressions delivered with a dash of humor carried the additional emotional weight of admitting a flaw, such as: “Sometimes failure makes me angry, which reminds me of a joke: Why is the railroad angry? Because people are always crossing it!”

“We are interested in how society will change as we add forms of artificial intelligence to our midst,” explained Nicholas A. Christakis, Sterling Professor of Social and Natural Science. “As we create hybrid social systems of humans and machines, we need to evaluate how to program the robotic agents so that they do not corrode how we treat each other.”

Understanding how robots influence emotions and communication within human spaces will be of paramount concern as technology advances and intelligent machines are further integrated into society and industry. The goal is cooperation, social harmony, and smooth interactions for both robots and people.

Now, shall we play a game?